dds <- DESeq(dds)estimating size factorsestimating dispersionsgene-wise dispersion estimatesmean-dispersion relationshipfinal dispersion estimatesfitting model and testingA fundamental part of RNA-seq is identifying which genes are more highly expressed in one group of samples compared to another group of samples. This is called differential gene expression (DGE) analysis. Notice that we say we are comparing groups of samples, not comparing individual samples. In science we always need replicates – it is not meaningful to make conclusions based on a single sample or observation.

In order to identify which genes are differentially expressed between two groups (in this case, we have 4 samples that are in the treatment group and 4 samples in the untreated or control group), we need to test if the two groups are significantly different from one another, which can be a challenging question to answer. We can start by specifically asking our question as a statistical question: Are the differences we observe between the two groups greater than the differences we would expect to see by chance?

DESeq2 is a highly-regarded R package for analysing RNA-seq data. DESeq2 uses a negative binomial method to model the count data, and combines this with a generalised linear model (GLM) to identify differentially expressed genes. For more about the DESeq2 package, you can read the original article.

In practice, a lot of the statistical tests and corrections happen ‘behind the scenes’ when performing DGE using software such as DESeq2.

Let’s run the code and then talk about what’s happening:

dds <- DESeq(dds)estimating size factorsestimating dispersionsgene-wise dispersion estimatesmean-dispersion relationshipfinal dispersion estimatesfitting model and testingWe get a few print out messages as we run this code. What do these outputs mean?

An in-depth knowledge of what is happening here is not important for today, but some explanations below are provided for the curious!

Estimating size factors: assesses library size and calculates factors to adjust for differences between samples. This also adjusts for compositional differences (e.g., if gene X in sample 1 takes up a very large proportion of all available reads, other genes will have correspondingly fewer genes. If this effect is not uniform across samples, it can be corrected for during this stage).

Dispersion: adjusting for heteroscedasticity. DESeq2 makes use of variability estimates from not just one gene, but from all genes to make estimates about overall levels of variance. By bringing in (or “borrowing”) information from other genes, DESeq2 compensates for a small number of samples (which can lead to artificially small variance estimates otherwise).

Fitting model and testing: fitting the generalised linear model (GLM) and identifying differentially expressed genes.

As you may start to be seeing, using the correct statistical assumptions, analyses and corrections are important in RNA-seq. We will not be getting in to it in any more detail, but some extra info is available for the curious:

The statistical approach to this question is to begin with the null hypothesis (that there is no difference between the two groups) and test whether or not you can reject the null.

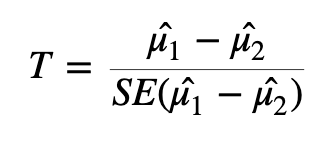

To test whether or not we can reject the null hypothesis we can calculate a test statistic:

Here we are taking the difference between the means of the two groups and then dividing that difference by some measure of variability - in this case, dividing by the standard error.

If the difference in means is LARGE relative to the variance, the test statistic will be large (indicating significance). If the difference between the means is small relative to the variance, the test statistic will be small, indicating the difference is probably not significant (i.e., the difference we observe is in-line with the variance we observe).

Once we have a test statistic we will calculate a p-value. The p-value is an indication of how likely we were to observe the given difference in means (or a more extreme difference) if there is truly no difference between the means. That is, how likely are we to see this difference due to random chance?

How do we interpret the p-value? We will specify a threshold (usually 0.05), and say that if a p-value is less than this threshold we will consider it a significant result. If the p-value is lower than the threshold we set, we will reject the null hypothesis (that the groups are identical) and accept the alternative (that there is a difference between the groups). The threshold we set is our level of “risk” that this event happened by chance alone.

When we declare that a result with a p-value of less than 0.05 is significant, we are saying that we believe the difference to be true since, if there was truly no difference, such a result would happen less than 5% of the time.

A useful mental analogy is to consider flipping a coin. We know that for a fair coin, the odds of getting heads is 50:50. Still, if we get four heads in a row it doesn’t worry us - it’s entirely plausible given the variation we expect. But if we get 50 heads in a row, while we know it’s statistically possible, we know it’s very unlikely to see such an extreme result. If we got to 100 heads in a row, we might instead start to question the fairness of the coin - we would reject the null hypothesis that the odds of heads and tails is identical.

It’s important to think about the two possible ways in which we could be wrong when testing a hypothesis like this: we could generate a false positive or a false negative.

A false positive or Type I error is when we reject the null hypothesis when there is truly no significant difference.

A false negative or Type II error is when we fail to reject the null hypothesis when there truly is a significant difference.

When we select a p-value threshold of 0.05, we are accepting the fact that 5% of the time that the null hypothesis is true, we will reject it. This becomes hugely problematic when you are testing thousands of genes! In order to avoid a large number of false positives we must correct for multiple testing. The more tests we are doing, the more stringent we need to be. We will not cover multiple testing corrections in depth but will briefly mention two types:

Family-wise Error Rate (FWER), also called the Bonferroni and Holm corrections, is a highly stringent procedure. This approach will give the minimal possible number of false positives, but will miss some true positives. Good if false positives are particularly costly (e.g., if you are providing someone with a severe medical diagnosis).

False Discovery Rate control (FDR), also called the Benjamini and Hochberg correction, is less conservative than FWER. This approach will identify more significant events, but expect a greater number of false positives. Use this approach if you are more concerned about missing something valuable and can afford a few false positives.

Many biological experiments struggle with getting enough samples for statistical significance. In RNA-seq experiments it is common to see groups of three samples or replicates. This is especially problematic when using the t-test (or similar procedures that involve variance). When testing for differences in gene expression it is possible to encounter genes with a small difference in the mean between the two groups and, due to the small sample size, a very small level of variation (a small standard error). A small difference in the means divided by a very small standard error translates to a large test statistic, which is then translated to a small p-value and what looks like a highly significant result.

Since this issue is caused by an artificially low standard error due to low sample numbers, a number of methods have proposed artificially increasing the standard error in some way. One way to implement this is through Shrinkage Estimation, which involves using Empirical Bayes methods to adjust individual test statistics based on the overall distribution of variances. During shrinkage estimation, small standard errors are made larger while large standard errors are made smaller.

TL;DR:

DESeq2 automatically applies the corrections we need for these data. The “standard” P-value of 0.05 is not sufficient – 5% is too many genes that will be false positives (i.e., genes that look like they are DE, but they are not). We need to use a correction - usually FDR. DESeq2 automatically applies this correction. We must correct for heteroscedasticity, i.e., cases where variance is dependent on the mean, which DESeq2 also automatically does.

The DESeq2 package requires a specific data storage object called a “DESeq Data Set” or DDS object. The DDS object contains not just the count data, but also the design matrix and the metadata. Once we have created the dds object, we can view the data stored within using the counts function, which we will pipe into head to only return the first 6 rows.

counts(dds) |> head() S1-Un S2-Tr S3-Un S4-Tr S5-Un S6-Tr S7-Un S8-Tr

ENSG00000000003 679 448 873 408 1138 1047 770 572

ENSG00000000005 0 0 0 0 0 0 0 0

ENSG00000000419 467 515 621 365 587 799 417 508

ENSG00000000457 260 211 263 164 245 331 233 229

ENSG00000000460 60 55 40 35 78 63 76 60

ENSG00000000938 0 0 2 0 1 0 0 0We will now create a new object, results, to store the results in. We can access those results using either the head function or the summary function, which will give us slightly different information - both are valid and useful ways of familiarising yourself with the data.

results <- DESeq2::results(dds)

results |> head()log2 fold change (MLE): dex trt vs untrt

Wald test p-value: dex trt vs untrt

DataFrame with 6 rows and 6 columns

baseMean log2FoldChange lfcSE stat pvalue

<numeric> <numeric> <numeric> <numeric> <numeric>

ENSG00000000003 708.602170 -0.3812539 0.100654 -3.787751 0.000152017

ENSG00000000005 0.000000 NA NA NA NA

ENSG00000000419 520.297901 0.2068127 0.112219 1.842944 0.065337210

ENSG00000000457 237.163037 0.0379206 0.143445 0.264357 0.791504963

ENSG00000000460 57.932633 -0.0881677 0.287142 -0.307053 0.758803336

ENSG00000000938 0.318098 -1.3782340 3.499875 -0.393795 0.693732273

padj

<numeric>

ENSG00000000003 0.00128293

ENSG00000000005 NA

ENSG00000000419 0.19646960

ENSG00000000457 0.91141814

ENSG00000000460 0.89500645

ENSG00000000938 NAThe results object contains information for all genes tested. It is practical to create a new object that contains only the genes we consider differentially expressed based on the thresholds (p-value, logFC) and methods (e.g., multiple testing adjustment) that suit our situation.

We will remove any rows that have NAs in the results object, then pull out only those with an adjusted p-value less than 0.05.

results <- na.omit(results)

# Keep all rows in the res object if the adjusted p-value < 0.05 and log2FC more than 1 or -1.

resultsPadjLogFC <- results[results$padj <= 0.05 & abs(results$log2FoldChange) > 1,]

resultsPadjLogFC |> dim()[1] 1011 6resultsPadjLogFC |> head()log2 fold change (MLE): dex trt vs untrt

Wald test p-value: dex trt vs untrt

DataFrame with 6 rows and 6 columns

baseMean log2FoldChange lfcSE stat pvalue

<numeric> <numeric> <numeric> <numeric> <numeric>

ENSG00000003402 2546.6142 1.19043 0.120621 9.86918 5.66250e-23

ENSG00000003987 25.5043 1.00481 0.372657 2.69633 7.01081e-03

ENSG00000004799 914.3790 2.64493 0.628565 4.20789 2.57766e-05

ENSG00000004846 17.9992 -1.69783 0.557866 -3.04345 2.33886e-03

ENSG00000005471 33.6640 -1.12005 0.357469 -3.13328 1.72862e-03

ENSG00000006283 62.9603 -1.46691 0.286729 -5.11601 3.12068e-07

padj

<numeric>

ENSG00000003402 4.81259e-21

ENSG00000003987 3.45611e-02

ENSG00000004799 2.63140e-04

ENSG00000004846 1.37943e-02

ENSG00000005471 1.06265e-02

ENSG00000006283 4.68179e-06library(ggplot2)

library(ggiraph)

library(plotly)

# Define significance thresholds

pval_threshold <- 0.05

fc_threshold <- 1 # log2 fold change threshold

# Create a data frame for plotting

plot_data <- data.frame(

logFC = results$log2FoldChange,

negLogPval = -log10(results$pvalue),

adj.P.Val = results$padj,

ID = rownames(results) # Assuming row names are gene IDs

)

# Add a column to categorize genes

plot_data$category <- ifelse(plot_data$adj.P.Val <= pval_threshold,

ifelse(plot_data$logFC >= fc_threshold, "Upregulated",

ifelse(plot_data$logFC <= -fc_threshold, "Downregulated", "Passes P-value cut off")),

"Not Significant")

# Create the ggplot object

p <- ggplot(plot_data, aes(x = logFC, y = negLogPval, color = category, text = ID)) +

geom_point(alpha = 0.6, size = 2) +

scale_color_manual(values = c("Upregulated" = "red", "Downregulated" = "blue", "Not Significant" = "grey20", "Passes P-value cut off" = "grey")) +

geom_vline(xintercept = c(-fc_threshold, fc_threshold), linetype = "dashed") +

geom_hline(yintercept = -log10(pval_threshold), linetype = "dashed") +

labs(

title = "Interactive Volcano Plot of Differential Gene Expression",

subtitle = paste("Thresholds: |log2FC| >", fc_threshold, "and adjusted p-value <", pval_threshold),

x = "log2 Fold Change",

y = "-log10(p-value)",

color = "Differential Expression"

) +

theme_minimal() +

theme(

legend.position = "right",

plot.title = element_text(hjust = 0.5, size = 16),

plot.subtitle = element_text(hjust = 0.5, size = 12)

)

# Convert ggplot to an interactive plotly object

interactive_plot <- ggplotly(p, tooltip = c("text", "x", "y", "color"))

# Customize hover text

interactive_plot <- interactive_plot %>%

layout(hoverlabel = list(bgcolor = "white"),

hovermode = "closest")

# Display the interactive plot

interactive_plot

We can see that there are a couple of genes that are quite highly up and down regulated. Let’s pick a few of these to explore further.

First, we created a new data frame that contains only the genes that are significantly differentially expressed based on our thresholds for adjusted p-value and log fold change (resultsPadjLogFC). We then created two new data frames, one for upregulated genes and one for downregulated genes, and sorted them by log fold change.

# create upregulated genes only dataframe

resultsUpReg <- as.data.frame(resultsPadjLogFC[resultsPadjLogFC$log2FoldChange>=0,]) |>

arrange(desc(log2FoldChange))

# create downregulated genes only dataframe

resultsDownReg <- as.data.frame(resultsPadjLogFC[resultsPadjLogFC$log2FoldChange<=0,]) |>

arrange(log2FoldChange)Now have a look at the first few rows of the upregulated and downregulated genes. You can see the most highly expressed genes are at the top of each table (looking at the log2FoldChange column).

head(resultsUpReg) baseMean log2FoldChange lfcSE stat pvalue

ENSG00000179593 67.243048 9.505972 1.0545022 9.014654 1.974931e-19

ENSG00000109906 385.071029 7.352628 0.5363902 13.707610 9.141988e-43

ENSG00000250978 56.318194 6.327384 0.6777974 9.335214 1.007873e-20

ENSG00000132518 5.654654 5.885112 1.3240432 4.444803 8.797236e-06

ENSG00000127954 286.384119 5.207160 0.4930828 10.560419 4.546302e-26

ENSG00000249364 8.839061 5.098107 1.1596139 4.396383 1.100695e-05

padj

ENSG00000179593 1.252968e-17

ENSG00000109906 2.256443e-40

ENSG00000250978 7.206290e-19

ENSG00000132518 1.000054e-04

ENSG00000127954 5.056498e-24

ENSG00000249364 1.224217e-04head(resultsDownReg) baseMean log2FoldChange lfcSE stat pvalue

ENSG00000128285 6.624741 -5.325905 1.2578165 -4.234247 2.293191e-05

ENSG00000267339 26.233573 -4.611550 0.6730945 -6.851268 7.319839e-12

ENSG00000019186 14.087605 -4.325907 0.8577678 -5.043214 4.577761e-07

ENSG00000183454 5.804171 -4.264077 1.1668773 -3.654264 2.579211e-04

ENSG00000146006 46.807597 -4.211850 0.5288519 -7.964139 1.663786e-15

ENSG00000141469 53.436528 -4.124784 1.1297977 -3.650905 2.613174e-04

padj

ENSG00000128285 2.378741e-04

ENSG00000267339 2.051149e-10

ENSG00000019186 6.613434e-06

ENSG00000183454 2.049951e-03

ENSG00000146006 7.152002e-14

ENSG00000141469 2.072367e-03These tables now show our top upregulated and downregulated genes in order, which we can explore further. The gene IDs shown are from the Ensembl database, which we can search online to find the gene name and function. For the next lesson, we are going to focus on one gene, which is the most highly expressed gene in our dexamethasone treated samples (i.e., gene ENSG00000179593 from the upregulated table).